Executive summary:

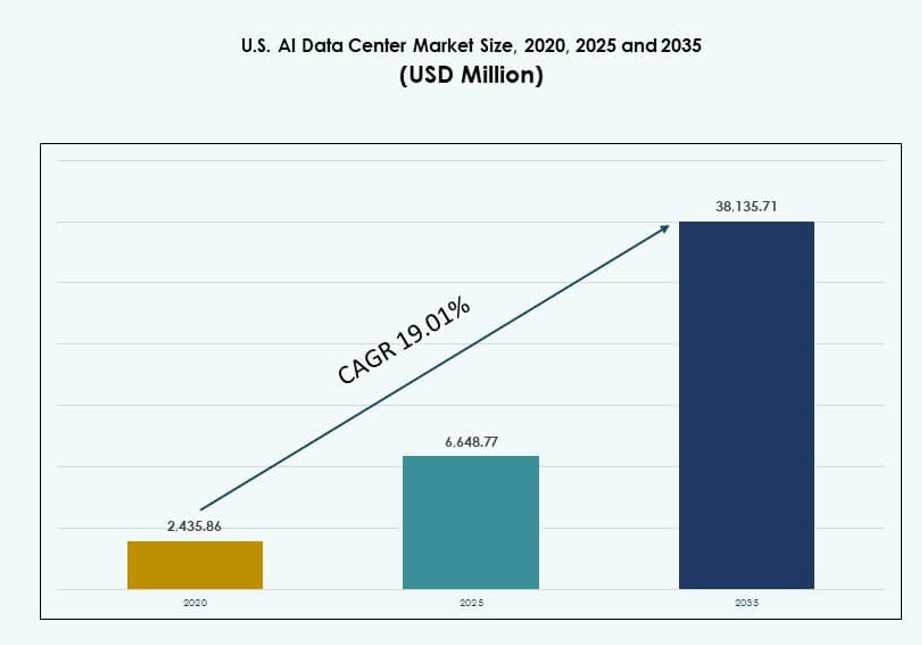

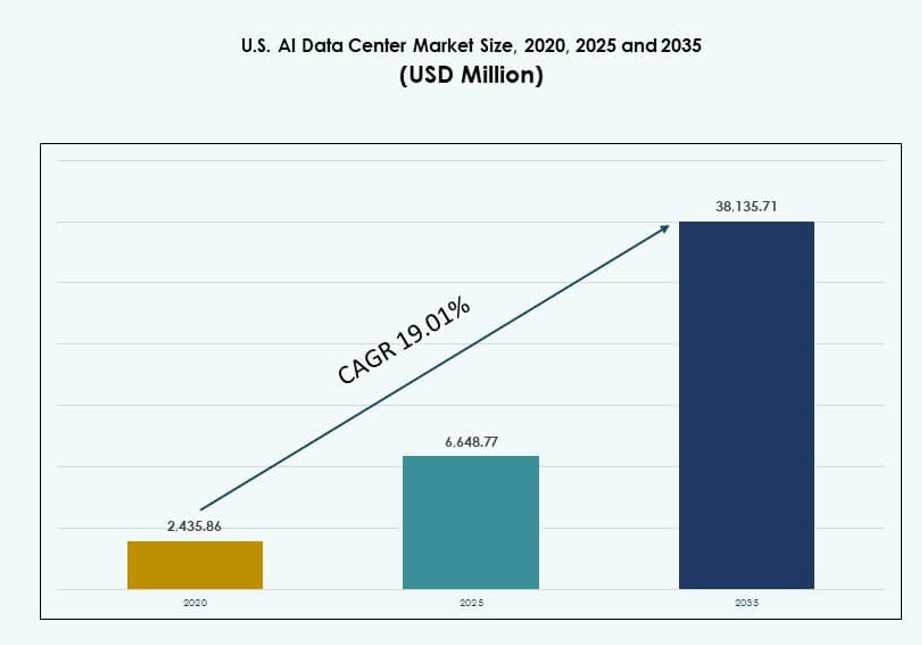

The U.S. AI Data Center Market grew from USD 2,435.86 million in 2020 to USD 6,648.77 million in 2025 and is anticipated to reach USD 38,135.71 million by 2035, at a CAGR of 19.01% during the forecast period.

| REPORT ATTRIBUTE | DETAILS |
| --- | --- |
| Historical Period | 2020-2023 |
| Base Year | 2024 |
| Forecast Period | 2025-2035 |
| U.S. AI Data Center Market Size 2025 | USD 6,648.77 Million |
| U.S. AI Data Center Market, CAGR | 19.01% |
| U.S. AI Data Center Market Size 2035 | USD 38,135.71 Million |
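For readers who want to sanity-check the headline growth figures, the sketch below applies the standard compound annual growth rate formula to the market sizes quoted in this summary. The period counts (5 years for 2020 to 2025, 10 years for 2025 to 2035) are assumptions; the report does not state which base value or compounding convention sits behind its 19.01% figure, so the implied rate may land close to the published number without matching it exactly.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Compound annual growth rate over `periods` annual compounding steps."""
    if start_value <= 0 or periods <= 0:
        raise ValueError("start_value and periods must be positive")
    return (end_value / start_value) ** (1 / periods) - 1


# Market sizes quoted in the report, in USD million.
SIZE_2020 = 2435.86
SIZE_2025 = 6648.77
SIZE_2035 = 38135.71

# Period counts are assumptions, not stated by the report.
historical_rate = cagr(SIZE_2020, SIZE_2025, periods=5)   # 2020 -> 2025
forecast_rate = cagr(SIZE_2025, SIZE_2035, periods=10)    # 2025 -> 2035

print(f"2020-2025 implied CAGR: {historical_rate:.2%}")
print(f"2025-2035 implied CAGR: {forecast_rate:.2%}")
```

A small gap between the implied forecast rate and the published CAGR usually points to a different base year or period count rather than an error in the market-size figures themselves.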

The market is rapidly evolving as AI workloads reshape compute, networking, and cooling demands. Hyperscalers deploy AI training clusters with liquid-cooled racks exceeding 30 kW per cabinet. Cloud-native and enterprise users seek optimized infrastructure for LLMs, vision models, and real-time inference. Businesses invest in scalable, energy-efficient data center platforms to maintain competitiveness. Infrastructure providers tailor facilities for AI resilience and low latency. AI adoption accelerates facility redesigns, orchestration tools, and GPU-integrated rack architectures. The U.S. AI Data Center Market provides strategic growth opportunities across sectors seeking AI-driven transformation.

Northern Virginia leads the market with the largest capacity, driven by fiber access, low power costs, and hyperscale campuses. Silicon Valley and Dallas-Fort Worth follow due to high enterprise demand and established ecosystems. Emerging zones like Phoenix, Atlanta, and Columbus gain traction with land availability and favorable permitting. Edge growth appears across states like North Carolina and Missouri. These regions attract deployments for AI inference and sovereign workloads. The U.S. AI Data Center Market reflects both regional concentration and increasing diversification across metro and sub-metro zones.

Market Dynamics:

Market Drivers

Surging AI Model Complexity Requires High-Density Compute Infrastructure at Scale

Large language models, image generators, and real-time recommendation engines demand dense compute clusters. Operators scale up power-dense racks to accommodate GPUs and AI accelerators, with deployments often exceeding 30 kW per rack. Liquid cooling becomes essential in maintaining thermal thresholds across hyperscale environments. The U.S. AI Data Center Market benefits from ongoing upgrades in thermal and power design. GPU-accelerated workloads push facilities to reconfigure rack layouts. Custom silicon and inference optimization reshape workload distribution. The market supports growing cloud-native and edge AI ecosystems. It anchors innovation across enterprise, academic, and government sectors. Businesses invest to secure low-latency AI capacity closer to users.

- For instance, NVIDIA’s DGX H100 systems with 8x H100 GPUs deliver 32 petaFLOPS FP8 performance at 10.2 kW max system power.
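To put the rack-density figures in context, the following sketch estimates how many systems of a given maximum power draw fit within a rack power budget. The 10.2 kW system figure and the 30 kW rack figure come from the text; the 50 kW budget, the `headroom` parameter, and the function name are illustrative assumptions. Real deployments also account for networking gear, power distribution losses, and cooling limits, none of which are modeled here.

```python
import math


def systems_per_rack(rack_budget_kw: float, system_max_kw: float,
                     headroom: float = 0.0) -> int:
    """Whole systems that fit in a rack power budget.

    `headroom` is the fraction of the budget held in reserve
    (a hypothetical planning knob, not taken from the report).
    """
    if rack_budget_kw <= 0 or system_max_kw <= 0:
        raise ValueError("power values must be positive")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    usable_kw = rack_budget_kw * (1 - headroom)
    return math.floor(usable_kw / system_max_kw)


DGX_H100_MAX_KW = 10.2  # max system power quoted in the text

# 30 kW is the rack density cited in the text; 50 kW is illustrative only.
for budget_kw in (30, 50):
    count = systems_per_rack(budget_kw, DGX_H100_MAX_KW)
    print(f"{budget_kw} kW rack: {count} system(s) at {DGX_H100_MAX_KW} kW each")
```

Under these assumptions a 30 kW rack holds two such systems at maximum draw, which helps explain why operators push rack budgets well beyond that threshold.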

Government Incentives and National AI Infrastructure Plans Support Data Center Expansion

Federal and state programs fund AI innovation and infrastructure with multibillion-dollar incentives. Investment targets include data center development, energy efficiency, and AI model training. Public-private partnerships strengthen regional AI ecosystems. The U.S. AI Data Center Market aligns with national strategies on AI leadership and compute sovereignty. Tax credits and power grid access streamline hyperscale deployments. Public cloud operators coordinate with local regulators on AI facility siting. High-performance computing clusters enable national research initiatives. AI factories and GPU cloud zones emerge as strategic assets. The market sees capital inflows from sovereign and institutional investors.

- For instance, the U.S. CHIPS and Science Act of 2022 allocated $52.7 billion total, including $39 billion for semiconductor manufacturing facilities critical to AI compute chips.

Hyperscaler-Led Investment Surge Across Tier I and Emerging Data Center Markets

Leading hyperscalers dominate large-scale AI data center builds, often exceeding 100 MW per campus. AI workloads drive demand for modular and scalable rack systems. The U.S. AI Data Center Market is seeing record land acquisitions and investment rounds. Expansion moves beyond Northern Virginia into Columbus, Atlanta, and Phoenix. Rack vendors partner with cloud platforms to meet density and cooling needs. Infrastructure-as-a-service models include AI-optimized capacity. Growth continues in metro zones with strong connectivity and renewable energy. Firms prioritize regions offering grid stability and water-efficient cooling. Enterprise adoption of AI-as-a-service drives downstream infrastructure buildouts.

Rapid Growth of AI Startups and Vertical AI Drives Edge and Colocation Demand

AI-native startups, healthcare AI platforms, and fintech models require scalable compute infrastructure. These firms choose colocation and edge deployments for cost efficiency and speed to market. The U.S. AI Data Center Market responds with low-latency, high-availability zones tailored for AI inference. Rack configurations shift toward edge GPU nodes and distributed AI fabrics. Telecom operators enable 5G-based edge AI deployments across cities. Financial institutions and autonomous vehicle companies scale localized AI workloads. Startups seek proximity to data streams and user nodes. Service providers build flexible rack environments for AI-specific tenants. Private equity flows into edge-focused operators to capture demand.

Market Trends

Shift Toward Liquid-Cooled and Hybrid Cooling Designs to Manage Rising Rack Densities

Thermal design evolves across data centers due to increasing power and AI-specific workloads. Operators integrate direct-to-chip and rear-door heat exchangers to cool high-density racks. The U.S. AI Data Center Market leads global adoption of immersion and hybrid cooling methods. Rack-level design now supports more than 50 kW per node. Vendors redesign airflow and containment systems for thermal efficiency. Facility upgrades include cold plate retrofits and liquid-distribution units. Operators prioritize water efficiency and PUE under 1.2. Facilities benchmark cooling innovations for AI zones. Cooling has become a key differentiator in AI-optimized data center builds.
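The PUE target mentioned above can be made concrete with a short calculation. Power usage effectiveness is the ratio of total facility power to IT equipment power, so a PUE under 1.2 means cooling and other overhead stay below 20% of the IT load. The sketch below uses a hypothetical 1,000 kW IT load; the function names and sample values are assumptions for illustration, not figures from the report.

```python
def pue(it_load_kw: float, overhead_kw: float) -> float:
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_load_kw <= 0 or overhead_kw < 0:
        raise ValueError("it_load_kw must be positive and overhead_kw non-negative")
    return (it_load_kw + overhead_kw) / it_load_kw


def max_overhead_kw(it_load_kw: float, target_pue: float) -> float:
    """Largest non-IT load (cooling, power conversion, lighting) that meets a PUE target."""
    if it_load_kw <= 0 or target_pue < 1:
        raise ValueError("it_load_kw must be positive and target_pue at least 1")
    return it_load_kw * (target_pue - 1)


# Hypothetical AI hall with 1,000 kW of IT load.
IT_LOAD_KW = 1000.0

print(f"Overhead allowed at PUE 1.2: {max_overhead_kw(IT_LOAD_KW, 1.2):.0f} kW")
print(f"PUE with 350 kW of overhead: {pue(IT_LOAD_KW, 350.0):.2f}")
```

Because the overhead allowance scales with IT load, denser AI racks raise the absolute cooling budget while leaving the ratio unchanged, which is one reason operators report both PUE and water efficiency.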

Rising Deployment of AI Factory Zones by Cloud Providers and Semiconductor Partners

Cloud hyperscalers collaborate with chipmakers to deploy AI-dedicated clusters in zonal patterns. These AI factory zones house thousands of GPU servers for training and inference. The U.S. AI Data Center Market supports such architectures with specialized racks and power topologies. Clusters are optimized for multi-tenant, multi-GPU workloads. Rack design integrates high-speed NVLink, NVMe-oF, and PCIe Gen5 interfaces. Vendors enable dynamic workload reallocation through AI orchestration layers. Fabric interconnects support petabyte-scale data movement between nodes. AI zones often operate in blackout-isolated segments to ensure reliability. Facilities adopt tiered security and redundancy across rack-level assets.

Convergence of AI and HPC Drives Adoption of AI-Optimized Interconnects and Orchestration

AI workloads share resource needs with scientific computing and simulation tasks. HPC vendors now offer AI-optimized racks with tightly coupled memory and compute. The U.S. AI Data Center Market embraces this convergence through joint architecture designs. Operators deploy InfiniBand, CXL, and RDMA-enabled networks across rack clusters. Rack layouts support distributed model parallelism and mixed precision compute. Software orchestration tools balance utilization across GPUs and CPUs. AI workloads benefit from low-latency data pipelines and real-time scheduling. Data centers adopt programmable fabrics to enable flexible AI workflows. Infrastructure providers invest in co-designed AI-HPC environments.

Rise of Sovereign AI Requirements and State-Level Data Compliance Accelerates Localized Builds

Governments and regulated industries demand AI data sovereignty for compliance and security. Enterprises seek localized rack deployments in government-certified facilities. The U.S. AI Data Center Market adapts through sovereign cloud regions and state-specific AI zones. Operators implement zero-trust architectures across rack clusters. Certification standards include FedRAMP, HIPAA, and CJIS compliance. Rack vendors offer tamper-evident and access-controlled configurations. Localized deployments help financial and healthcare firms meet jurisdictional policies. States support in-region builds with land and power incentives. Sovereign AI needs reshape rack procurement strategies and edge planning.

Market Challenges

Power Grid Constraints, Permitting Delays, and Rising Energy Costs Affect Capacity Expansion

Growing AI compute needs strain power availability in top-tier data center regions. Utility upgrades face long lead times, limiting rapid scaling. The U.S. AI Data Center Market contends with grid congestion in Northern Virginia, Dallas, and Silicon Valley. New projects often wait months for interconnection approvals. Rising energy prices affect total cost of AI workloads. Operators invest in on-site generation, battery storage, and utility partnerships. Permitting timelines vary by state and jurisdiction. Delays impact deployment of high-density racks and liquid-cooled systems. Investors must assess location-specific risks tied to energy and permitting access.

Supply Chain Risks, Rack Hardware Shortages, and Talent Gaps Disrupt Deployment Timelines

Rack vendors face intermittent delays in delivering high-density enclosures and thermal components. Lead times for AI-grade GPUs and cooling units remain volatile. The U.S. AI Data Center Market experiences constraints in workforce availability for integration, HVAC, and controls. Supply issues affect consistency in rack specification and testing. Staffing challenges extend deployment and onboarding periods. Specialized roles in AI facility design and orchestration remain hard to fill. Vendors struggle to scale support for custom rack requests. Operators explore prefabricated rack modules to reduce risk. Talent and hardware gaps limit rapid AI cluster expansion.

Market Opportunities

Growing Cross-Industry Adoption of AI Applications Creates Broad-Based Infrastructure Demand

AI models are now embedded across sectors from healthcare and retail to media and manufacturing. Firms seek scalable infrastructure to handle new AI workflows. The U.S. AI Data Center Market benefits from this shift through sector-specific rack demand. Healthcare and financial services drive need for secure, localized capacity. Retail and automotive prioritize edge inferencing nodes. This diversity expands the total addressable market for rack vendors and service providers.

Inflow of Private Capital and Real Estate Investment Trusts Supports Greenfield AI Builds

Private equity and REITs target AI infrastructure as a long-term asset class. New campuses feature AI-ready rack zones and low-latency fiber paths. The U.S. AI Data Center Market gains from joint ventures between hyperscalers and infrastructure funds. Strategic partnerships fast-track buildouts across secondary markets. Investors prioritize power procurement and land banking for future AI clusters.

Market Segmentation

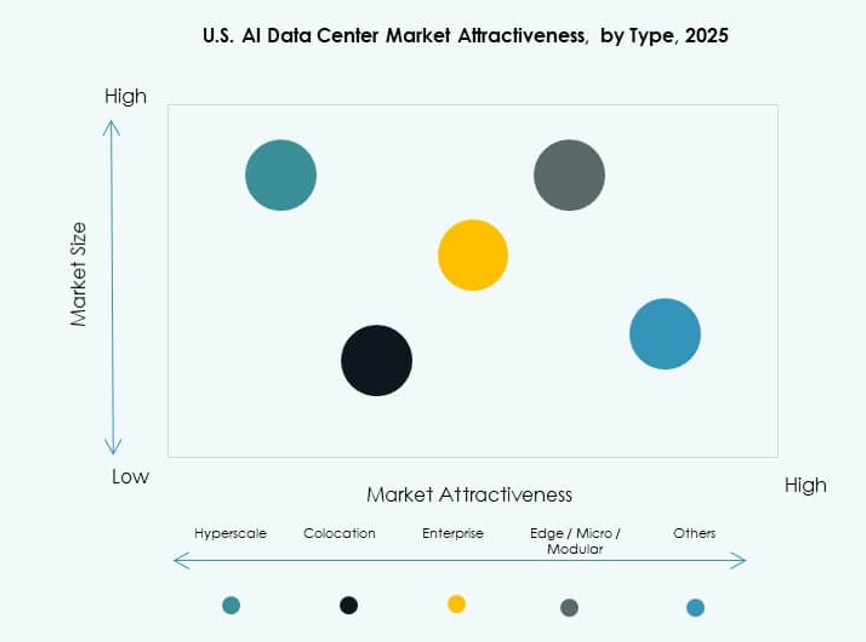

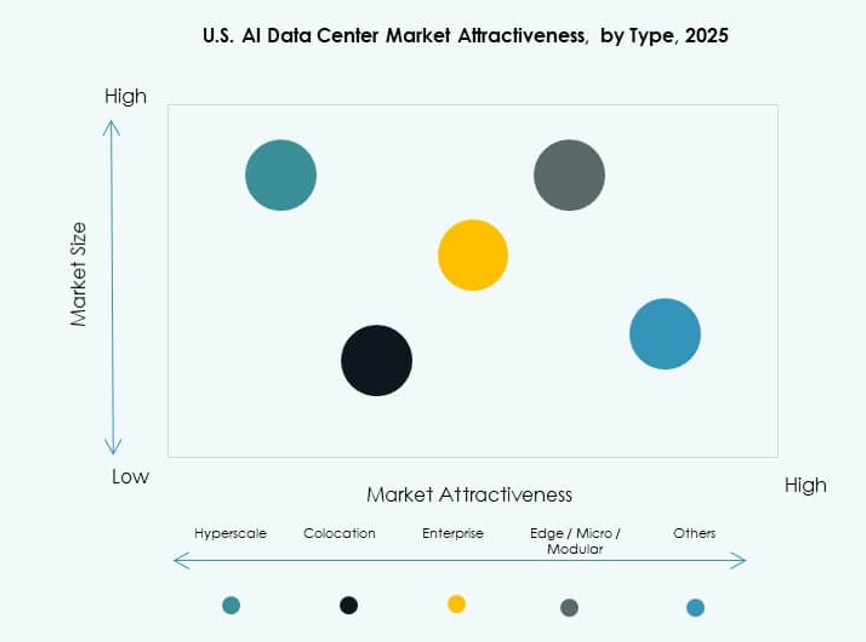

By Type

Hyperscale dominates the U.S. AI Data Center Market due to growing cloud AI workloads and dedicated training clusters. These facilities house high-density racks with AI accelerators and liquid-cooling systems. Colocation and enterprise segments grow through demand for shared infrastructure from startups and regulated industries. Edge and micro data centers rise in importance for real-time inference near users. Hyperscale holds the largest market share due to resource concentration and investment scale.

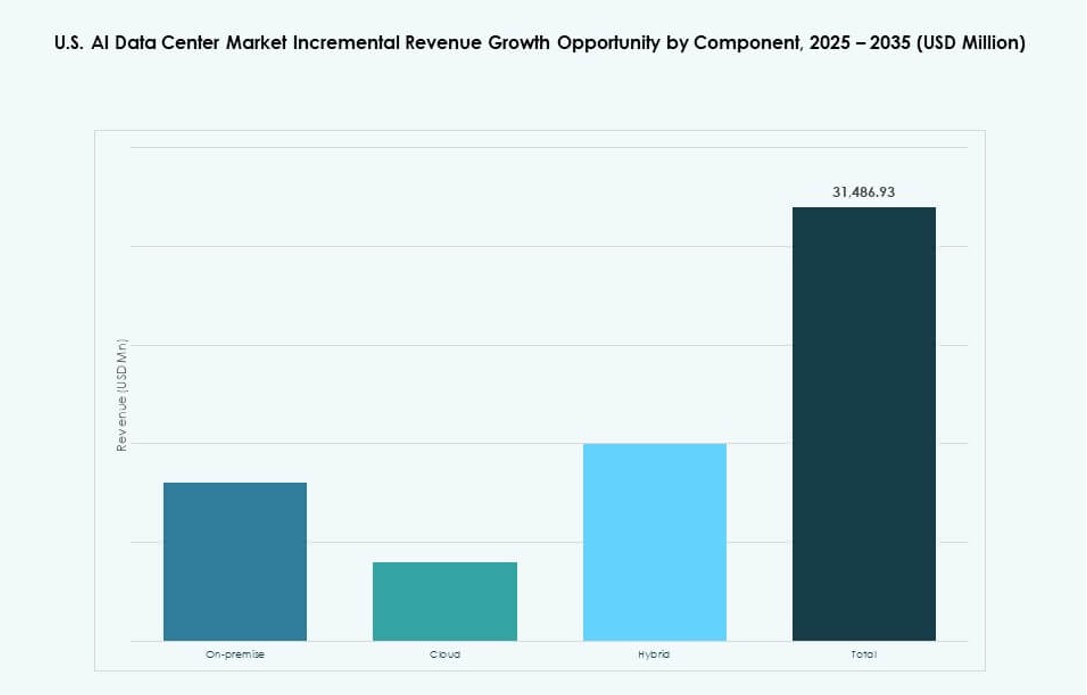

By Component

Hardware leads the component segment, driven by the need for AI-optimized racks, GPUs, and cooling systems. Software and orchestration platforms enable workload scheduling, GPU management, and energy optimization. Services contribute significantly through design, retrofitting, and thermal consulting. The U.S. AI Data Center Market sees hardware retaining the dominant share, supported by continuous upgrades for power and density.

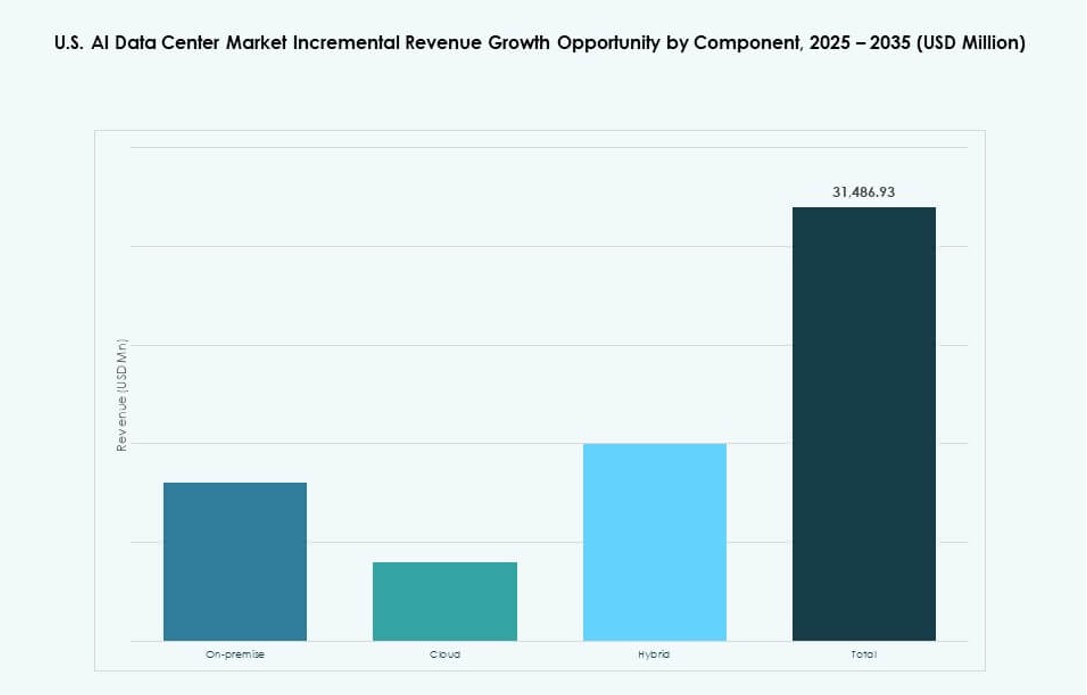

By Deployment

Cloud deployment dominates due to its scalability, lower capex, and access to hyperscaler ecosystems. Hybrid deployments gain traction among enterprises balancing latency, security, and control. On-premises deployments shrink slightly as firms shift AI workloads to specialized providers. The U.S. AI Data Center Market reflects strong cloud expansion, especially among financial, retail, and GenAI firms.

By Application

Generative AI leads application demand, driving requirements for training clusters and memory-optimized racks. Machine learning and computer vision also contribute substantially, especially in logistics, automotive, and healthcare. NLP and other categories scale in chatbots, voice, and document processing. The U.S. AI Data Center Market records GenAI as the fastest-growing segment.

By Vertical

IT and telecom dominate vertical demand, followed by BFSI and healthcare. These sectors need AI infrastructure for model deployment, fraud detection, and diagnostics. Retail, media, and automotive sectors invest in inferencing capacity at scale. Manufacturing adopts AI for quality control and automation. The U.S. AI Data Center Market sees IT and telecom holding the largest share due to hyperscaler expansion.

Regional Insights

Northern Virginia, Silicon Valley, and Dallas-Fort Worth Maintain Dominance with Over 60% Share

Northern Virginia holds the largest share of the U.S. AI Data Center Market, exceeding 35% due to hyperscale footprints and fiber access. Silicon Valley and Dallas-Fort Worth follow, driven by enterprise presence and network density. These regions support large AI zones with redundant power and scalable land. Operators deploy thousands of high-density racks in these metros. They lead due to existing infrastructure and regulatory familiarity. Market activity remains centered around Ashburn, Santa Clara, and Plano.

- For instance, Northern Virginia’s data center inventory reached 2,930.1 MW in 2024, representing the largest market with 451.7 MW net absorption and just 0.48% vacancy.

Phoenix, Columbus, and Atlanta Emerge with 25% Share Due to Power Access and Lower Costs

Secondary metros like Phoenix and Columbus attract hyperscaler investment through abundant land and power. Atlanta benefits from connectivity, favorable permitting, and low disaster risk. These regions support greenfield AI builds with modular rack designs. The U.S. AI Data Center Market expands into these zones to meet rising GenAI demand. Rack density and power availability drive site selection. Investors prioritize these locations for long-term AI infrastructure scaling.

- For instance, Atlanta saw the highest net absorption in 2024, surpassing Northern Virginia, with under-construction capacity surging 195% year-over-year.

Regional Edge Zones Across Midwest and Southeast Account for 15% Market Share

Localized AI workloads push demand into edge nodes across underserved metros. Telecom and content players deploy racks in mid-sized cities to reduce latency. The U.S. AI Data Center Market sees edge growth in Tennessee, Missouri, and North Carolina. These zones support AI inferencing in smart manufacturing, logistics, and healthcare. Rack deployments remain smaller but strategically vital. Market share continues to shift toward regional diversity for AI delivery.

Competitive Insights:

- Amazon Web Services (AWS)

- Microsoft (Azure)

- Google Cloud (Alphabet)

- Meta Platforms

- NVIDIA

- STACK Infrastructure

- Vantage Data Centers

- Equinix

- Digital Realty Trust

- CoreWeave

The competitive landscape of the U.S. AI Data Center Market is defined by hyperscalers, cloud service providers, chipmakers, and colocation operators. AWS, Microsoft, and Google lead in AI-specific infrastructure rollouts, investing in multi-region clusters and liquid-cooled rack zones. Meta and NVIDIA drive innovation in rack density and GPU-powered compute. STACK, Vantage, and Digital Realty expand AI-ready campuses across Tier I and Tier II metros. These firms compete on latency, power availability, and custom rack configurations. Specialized players like CoreWeave offer GPU-as-a-service at scale, disrupting traditional deployment models. The U.S. AI Data Center Market remains highly capital-intensive, with leadership depending on energy procurement, land access, and rapid AI workload integration.

Recent Developments:

- In January 2026, OpenAI partnered with SB Energy, a SoftBank Group company, to build and operate a 1.2 GW AI data center in Milam County, Texas, with OpenAI and SoftBank each investing $500 million to support Stargate’s AI infrastructure expansion.

- In January 2026, NVIDIA announced the Rubin platform, a next-generation AI supercomputer system set for deployment by U.S. cloud providers like Microsoft Azure, AWS, Google Cloud, and CoreWeave in data centers starting mid-2026.

- In October 2025, a consortium including BlackRock, NVIDIA, Microsoft, and xAI agreed to acquire Aligned Data Centers for $40 billion, providing 5 GW of AI-ready capacity across U.S. sites, with the deal expected to close in early 2026.

- In January 2024, STACK Infrastructure expanded its AI-ready data center capabilities, enhancing support for high-density AI and machine learning workloads through customizable designs and campuses in key markets.